The Trusted Data Observatory will serve as a central hub, making reliable information easier to find, understand, and use.

For a world where trusted data is accessible to all, empowering informed decisions and countering misinformation.

CONTEXT

The challenges of accessing and using reliable data

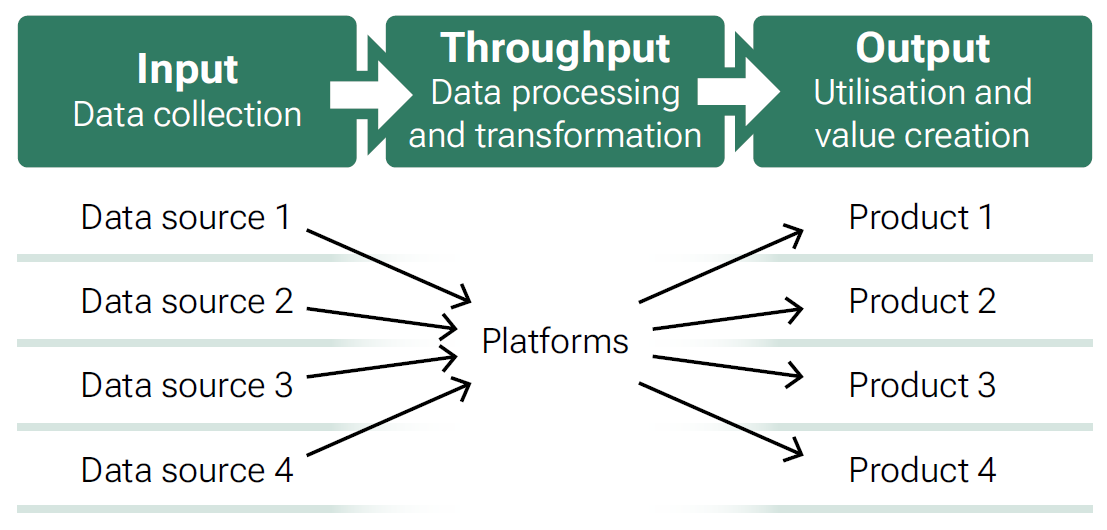

Data is the starting point for information, knowledge and insight. Finding the data you need when you need it, especially from trusted or official data sources, can be difficult. Lexical and AI-enabled search engines can compile such data in a very short time, but they collect the information from any text source on the World Wide Web. Moreover, most data still exists in separate areas, so-called silos: it is often collected for a specific purpose and can often only be used for that purpose.

What is metadata and why does it matter for data discovery?

Metadata is “data about data”: the information that describes the content, structure, quality, and origins of a dataset. It is the foundation that makes data discoverable, understandable, and comparable across systems.

In today’s AI‑enabled environment, metadata is no longer secondary. It is the entry point: if data cannot be found or interpreted by humans and machines, it cannot be trusted, reused, or integrated into decision‑making.

High‑quality datasets, such as official statistics, may contain robust information, but often remain invisible to search engines or AI systems. This usually happens because their metadata is fragmented, inconsistently applied, or not fully machine‑readable.

When metadata is open, harmonised, machine‑readable, and optimised for AI, it helps both humans and algorithms to:

- Identify authoritative and trustworthy data sources

- Assess relevance, timeliness, provenance, and quality

- Understand access conditions and usage rights

- Distinguish reliable information from misleading, non‑comparable, or low‑quality content

However, metadata practices vary widely across countries and organisations, and implementation is often decentralised. Even where strong metadata exists, it may not be exposed in a way that supports AI‑driven discovery. As a result, high‑quality information risks being overshadowed by inaccurate or unofficial sources.

In short: metadata matters because it is the gateway to trustworthy data discovery. Without structured and standardised metadata, reliable information cannot compete with misinformation in an AI‑driven digital ecosystem.

Challenge

How do metadata platforms enable data reuse?

Solution

Introducing the Trusted Data Observatory (TDO)

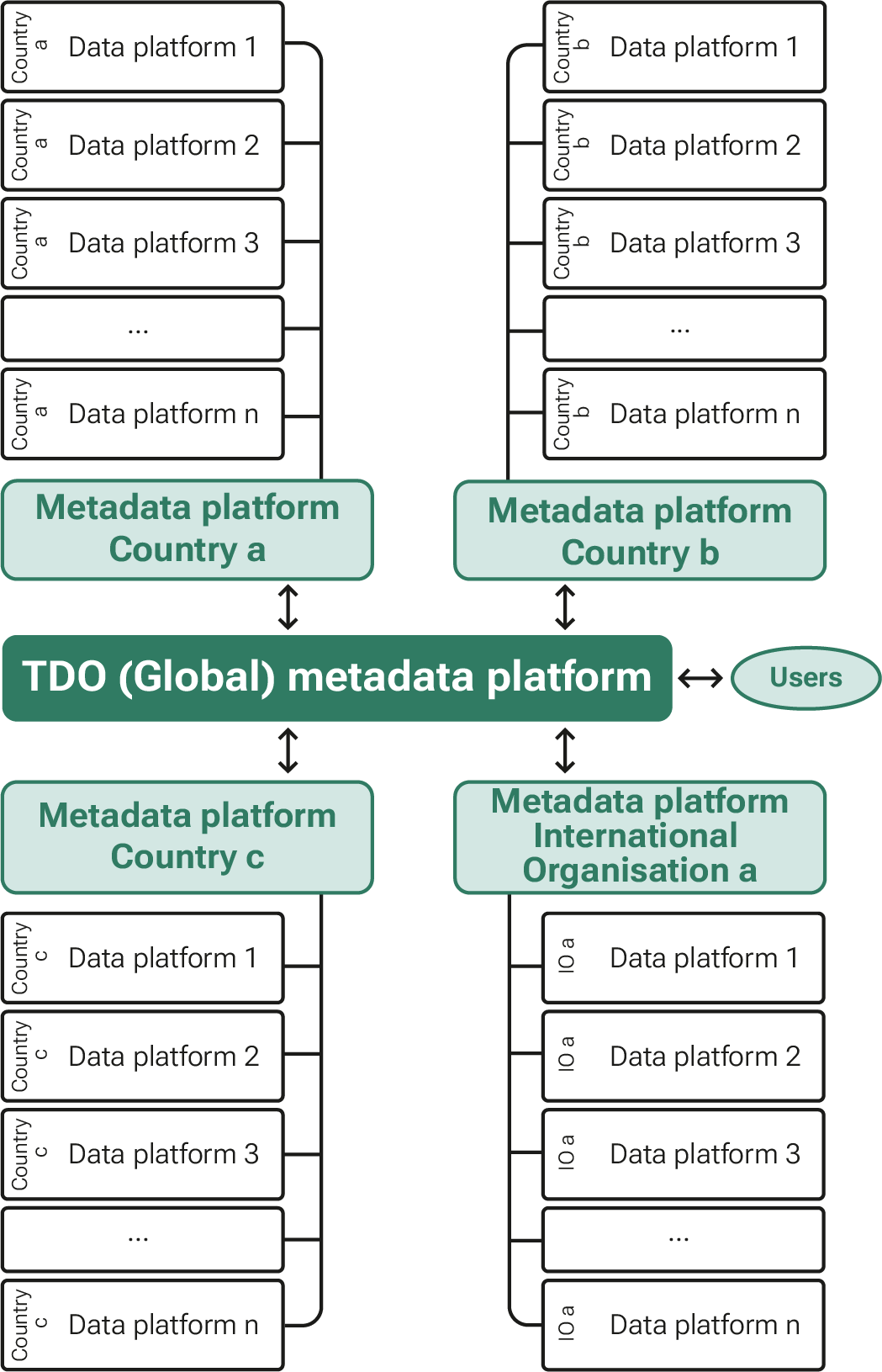

The Trusted Data Observatory (TDO) is a global metadata platform that solves the challenge of data discovery in an AI‑enabled world. Instead of hosting data, TDO describes trusted data using a Minimum Viable Metadata (MVM) model that is standardised, open, machine‑readable, and optimised for both humans and AI systems.
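As an illustration only, a minimal machine-readable metadata record might be sketched in Python as follows. The source does not list the MVM fields, so the class `MetadataRecord`, its field names, and the sample values are assumptions chosen to mirror the qualities named in this document (provenance, vintage, licensing, access).

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class MetadataRecord:
    """Hypothetical record; the real MVM field set is not given in the source."""
    title: str
    publisher: str    # provenance: who produced the dataset
    vintage: str      # reference period or release date
    licence: str      # usage rights
    access_url: str   # where the data lives; the data itself is not copied


def missing_fields(record: MetadataRecord) -> list[str]:
    """Return the names of fields that are empty or whitespace only."""
    return [name for name, value in asdict(record).items() if not value.strip()]


def to_json(record: MetadataRecord) -> str:
    """Serialise the record to machine-readable JSON."""
    return json.dumps(asdict(record), sort_keys=True)


# Made-up example values, for illustration only.
example = MetadataRecord(
    title="Resident population by region",
    publisher="Example National Statistical Office",
    vintage="2024",
    licence="CC BY 4.0",
    access_url="https://example.org/datasets/population",
)
print(missing_fields(example))
print(to_json(example))
```

A completeness check such as `missing_fields` is one simple way a platform could flag records that lack provenance or licensing information before they are exposed to search engines or AI systems.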

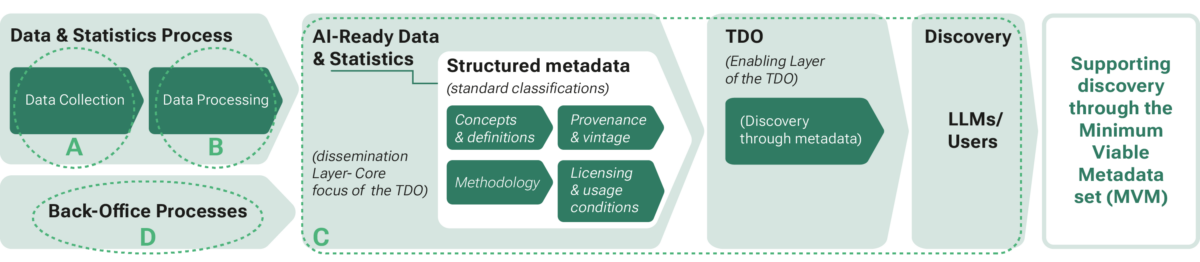

What is AI readiness?

AI-readiness for trusted data and statistics means ensuring that authoritative data and metadata are machine-readable, semantically interoperable, discoverable, and accompanied by clear provenance, vintage, and licensing information so that AI systems can retrieve and interpret them correctly.

It also includes the use of AI-enabled metadata standards – metadata that are structured, enriched, and exposed in ways that allow AI systems not only to discover trusted data sources, but to understand their meaning, context, limitations, and relationships across sources.

In this context, the TDO focuses specifically on the dissemination layer – strengthening discovery, providing details of access protocols for microdata, AI interfaces, and AI-enabled metadata.

Objectives of the TDO

Is a TDO feasible?

In 2024, a feasibility study commissioned by the Swiss Federal Department of Foreign Affairs and the Swiss Federal Statistical Office, and conducted by an independent consultant, concluded that the potential added value of a Trusted Data Observatory is significant.

Since then, the project has progressed into implementation planning, stakeholder engagement, and technical preparation phases. Key challenges identified include metadata quality and heterogeneity, governance and sustainability, and ensuring broad international participation. These challenges are being addressed through a phased, collaborative approach involving national statistical offices, international organisations, and technical experts.

The Phase 1 report has been completed and is ready to be published on the webpage.

How does the TDO work in practice?

Data held on national platforms and by international organisations will be made visible through each country’s or international organisation’s metadata platform. The data itself remains on the data producer’s own platform. If the metadata platforms are harmonised, the Trusted Data Observatory can connect to all of them, so that all trusted datasets could be made visible on one global platform. Because the Observatory works with metadata only, it does not modify data ownership or quality assurance responsibilities, but creates benefits and synergies for all participating data owners.
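The idea of connecting harmonised metadata platforms can be sketched, under simplifying assumptions, as building one searchable index from the records each platform exposes. The function names `build_index` and `search`, the record fields, and the sample platforms below are hypothetical and are not taken from the TDO specification; in practice the records would be harvested over each platform's own interface.

```python
from typing import Dict, List

Record = Dict[str, str]


def build_index(platforms: Dict[str, List[Record]]) -> List[Record]:
    """Combine metadata records from several platforms into one index.

    Only descriptive fields and links are kept; no dataset is downloaded.
    """
    index: List[Record] = []
    for platform, records in platforms.items():
        for record in records:
            # Tag each record with the platform that remains its owner.
            index.append({**record, "source_platform": platform})
    return index


def search(index: List[Record], term: str) -> List[Record]:
    """Return records whose title contains the term (case-insensitive)."""
    needle = term.lower()
    return [record for record in index if needle in record["title"].lower()]


# Made-up platforms and records, for illustration only.
platforms = {
    "country-a": [
        {"title": "Labour force survey", "url": "https://example.org/a/lfs"},
    ],
    "org-b": [
        {"title": "Global labour indicators", "url": "https://example.org/b/gli"},
        {"title": "Trade flows", "url": "https://example.org/b/trade"},
    ],
}
index = build_index(platforms)
for hit in search(index, "labour"):
    print(hit["source_platform"], hit["url"])
```

Each hit points back to the producer's platform through its `url`, which reflects the principle that the data stays where it is and only its description is shared.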

Benefits

Empowering Access to Trusted Data Through the TDO

Trusted data, which is representative, comparable, harmonised, interoperable, and subject to ethical principles, provides added value for societies and helps them with fact-checking and countering fake news. A global platform could bundle such trusted data from governments and international organisations. It would be able to tell you who holds which datasets, how good their quality is, how often they are collected, and whether they are representative. If they are open government data, you could access them directly via an API; otherwise, the platform would contain information on where you can request access and, if applicable, who is allowed to link them with other data. By accessing metadata on trusted data in a centralised manner, search engines and AI chatbots would also be able to improve their search results.
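The access logic described above (direct API access for open data, a request route otherwise) could look roughly like the following sketch. The field names `access`, `api_url`, `contact`, and `linkage_allowed_for` are assumptions made for this example, as are the sample records.

```python
from typing import Dict


def access_route(record: Dict[str, str]) -> str:
    """Describe how a user could reach the data behind a metadata record."""
    if record.get("access") == "open":
        return f"Open data: fetch directly from {record['api_url']}"
    route = f"Restricted: request access from {record['contact']}"
    if record.get("linkage_allowed_for"):
        route += f" (linkage permitted for: {record['linkage_allowed_for']})"
    return route


# Made-up records, for illustration only.
open_record = {"access": "open", "api_url": "https://example.org/api/population"}
restricted_record = {
    "access": "restricted",
    "contact": "microdata@example.org",
    "linkage_allowed_for": "accredited researchers",
}
print(access_route(open_record))
print(access_route(restricted_record))
```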

Timeline

Phase 1

Stakeholder identification, governance planning, and requirements definition have commenced in close cooperation with international organisations and national statistical offices.

2025 – 2026 (completed)

Phase 2

Agreement on a Minimum Viable Metadata (MVM) set, associated standards, technical specifications, and Proof of Concept (PoC) participants.

2025 – 2026 (completed)

Phase 3

Development of a scalable TDO prototype, implementation of the PoC, training of participants, and engagement with AI and technology stakeholders.

End 2026 – Q1 2027

Phase 4

Review of PoC

Review and refine the TDO following the PoC exercise.

By end 2027

Phase 5

Broader onboarding, integration with post-2030 agenda, and long-term sustainability.

Onboard key partners (NSIs and IOs not involved in the PoC) and integrate with the “Post-2030 Agenda”. Build a communications and engagement plan from Phase 3 to drive engagement of data compilers with the Observatory and usage of the Observatory by the user community. Examine a broader suite of trusted data sources within an agreed framework developed by the governance structure put in place for the TDO.

2027 – 2030

Building the Trusted Data Observatory: A Phased Approach

The feasibility study proposes several steps or phases of development towards full implementation of the Trusted Data Observatory. The first two phases are to be implemented in close cooperation with international organisations (based in Geneva) and national statistical offices from all regions of the world until the end of 2025. Data for Change – the PARIS21 Foundation will coordinate the implementation in Geneva.

Where will it be hosted?

Geneva provides a unique and well-suited environment for hosting the Trusted Data Observatory. It is home to many United Nations bodies and international organisations that are major producers and users of official data, and it offers a long-standing tradition of neutrality and multilateral cooperation. Moreover, Switzerland is currently setting up its own national metadata platform (I14Y), which means that know-how can be easily transferred. While Geneva offers a strong institutional ecosystem for the development of the TDO, its long-term governance will be anchored at the global level, reflecting the Observatory’s international scope, multilateral character, and worldwide reach.